Meta: Leveling Up Craft

This case study recounts the development of a quality framework and heuristic evaluation program for Meta’s Infrastructure Org.

Background

I joined a rapidly growing org (from 60 to over 200) that supported varied products across data infrastructure, privacy, developer products, and research infrastructure / analytics.

The team supported more than 150 active tools (and 1000+ inactive tools) and needed a way to reduce duplicative work and the ENG and design hours (and cost) spent fixing issues.

Quality guidelines needed to align with FTC consent standards and design system libraries, follow DAPR (data access privacy review) guidelines, and merge ENG implementation support with Infra UX design craft guidance.

Challenges

Cross-disciplinary teams were working under tight timelines. We needed to identify and resolve issues while minimizing negative impact on launch roadmaps.

To scale effectively, the org would need properly trained stewards for design and accessibility standards who could own downstream work.

Solution

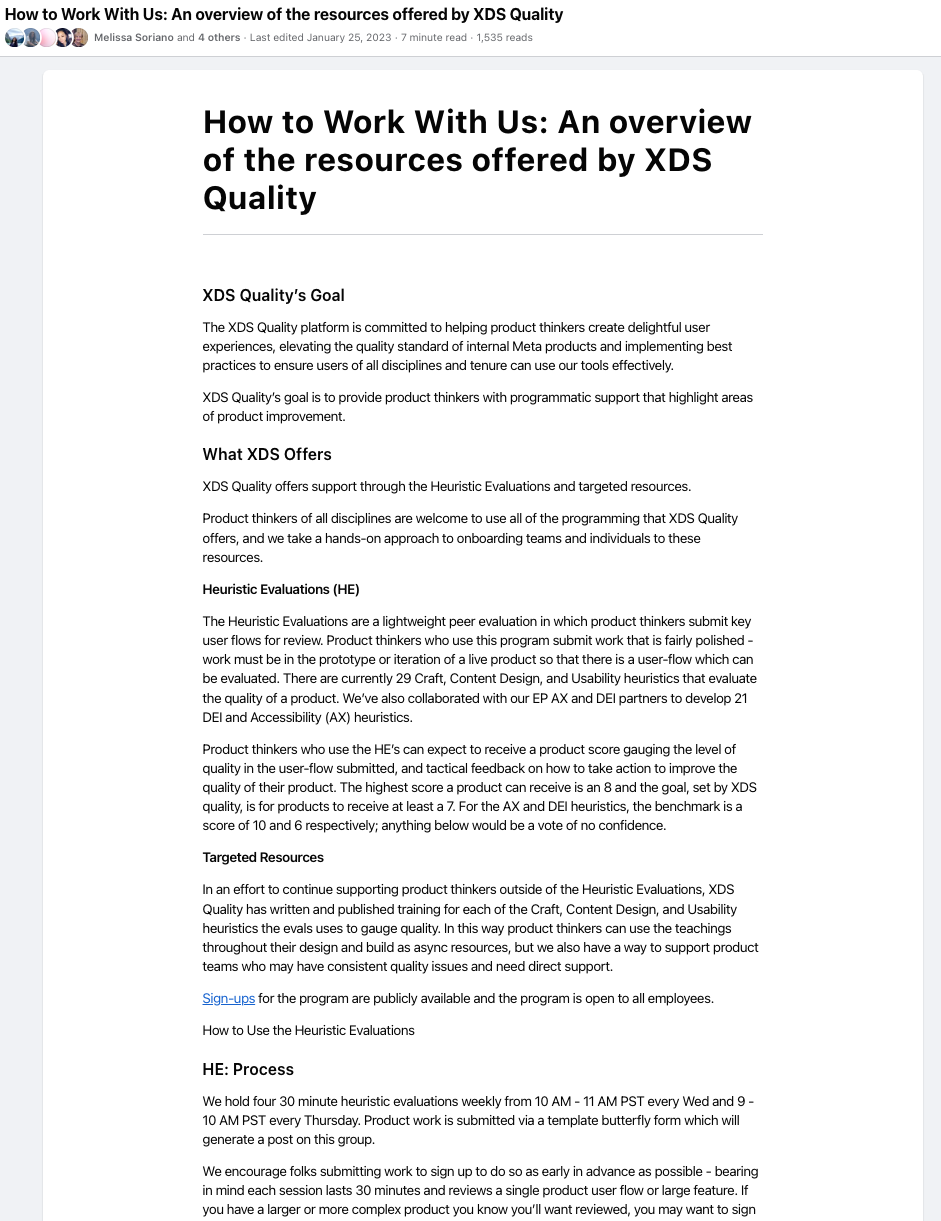

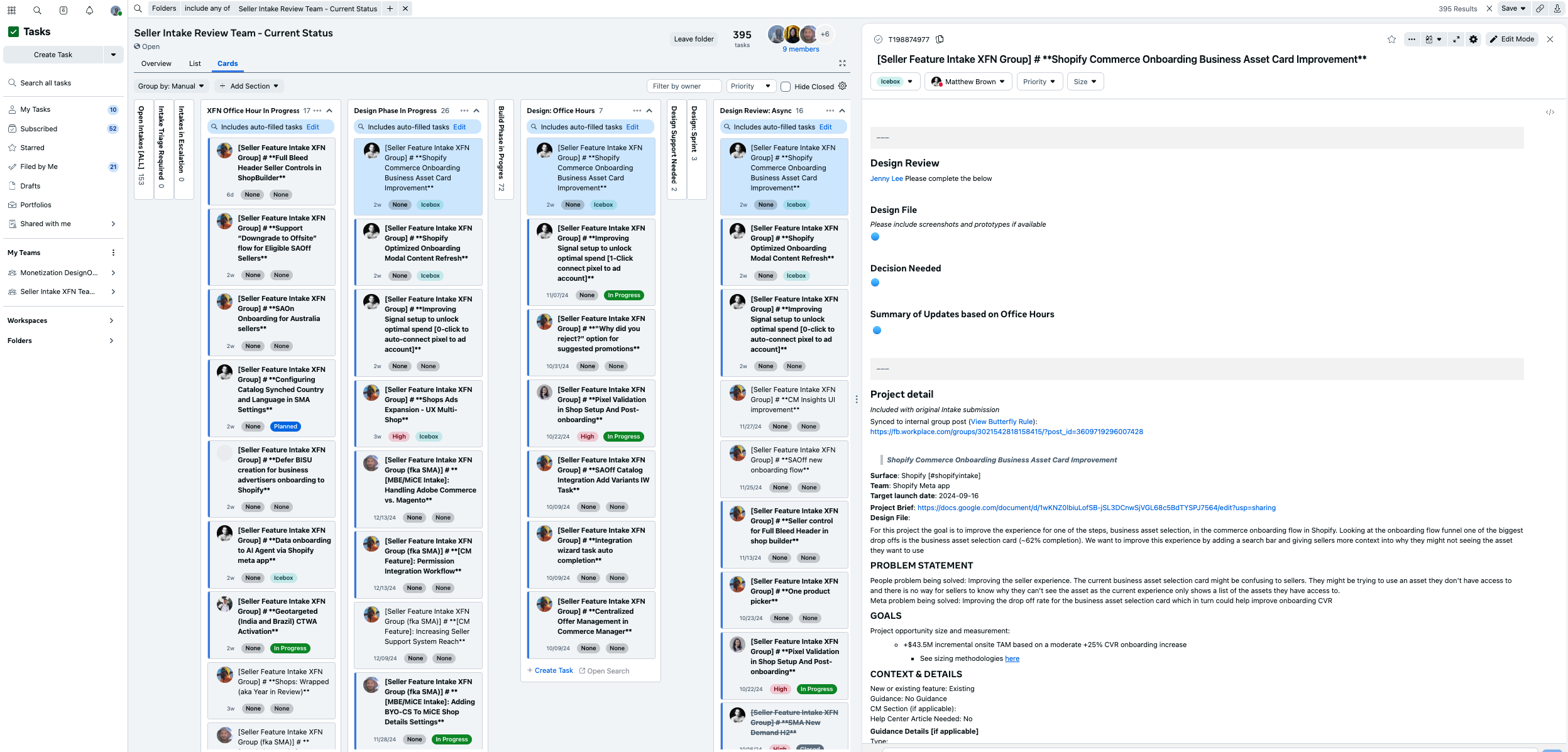

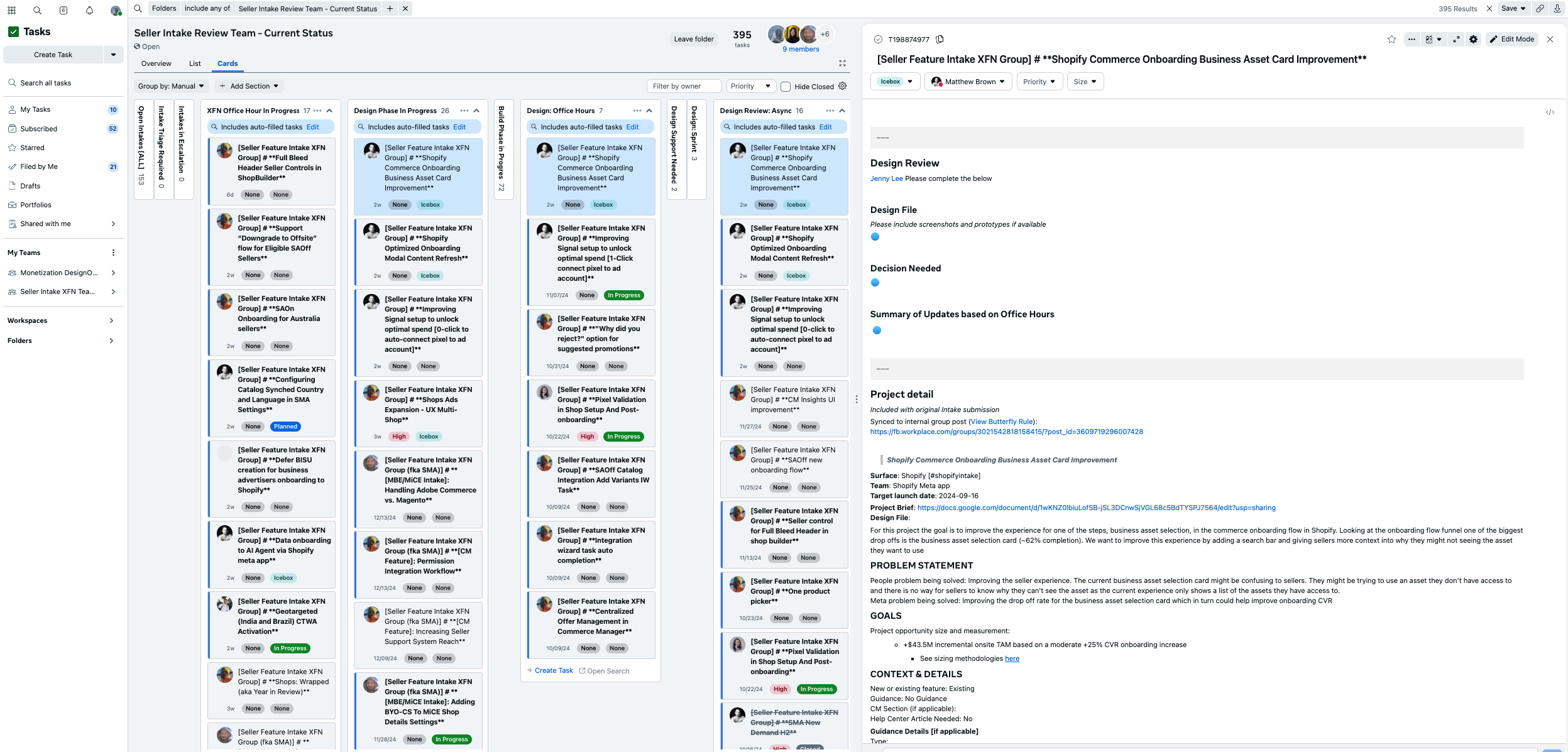

Coupled with a quarterly traffic control report, I proposed and bid for the creation of a quality program that would evaluate the craft and accessibility of product work across design, engineering, and research. The program would also include key leadership across critical pillars to identify friction areas and clashing work early.

I drove the creation of a 0-1 program that trained ICs and managers across ENG, design, research, and CD in quality evaluation, and subsequently launched it to the broader Infra Org and Enterprise Org (~400 total ICs). The program expanded, and I ultimately managed a team of 3 ICs whose role was managing and maintaining the branches of this core programming.

Process:

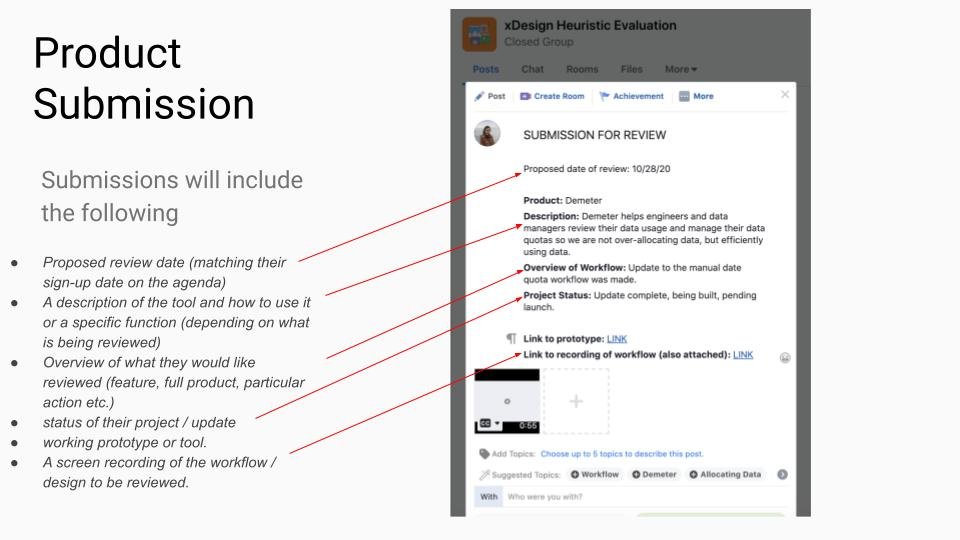

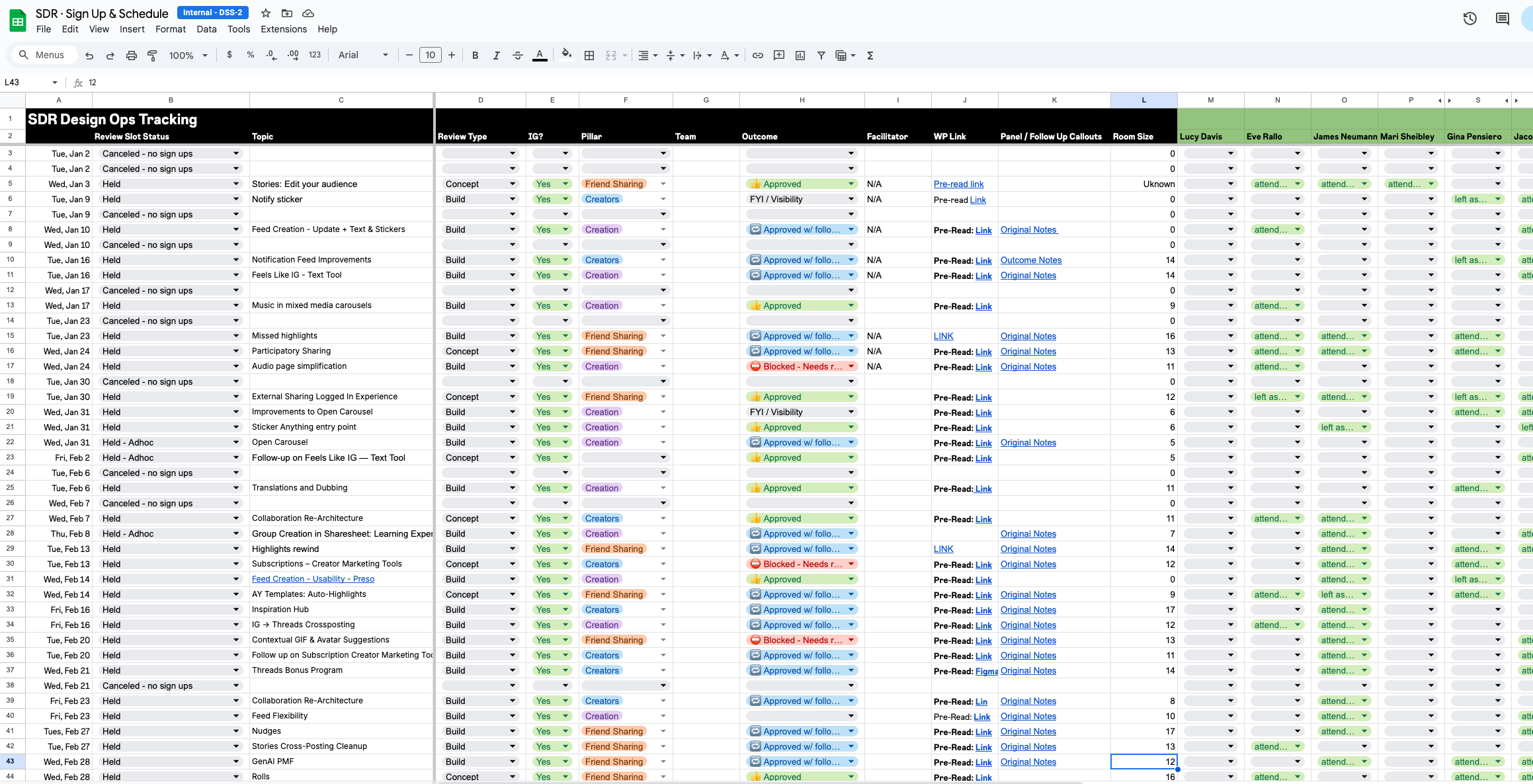

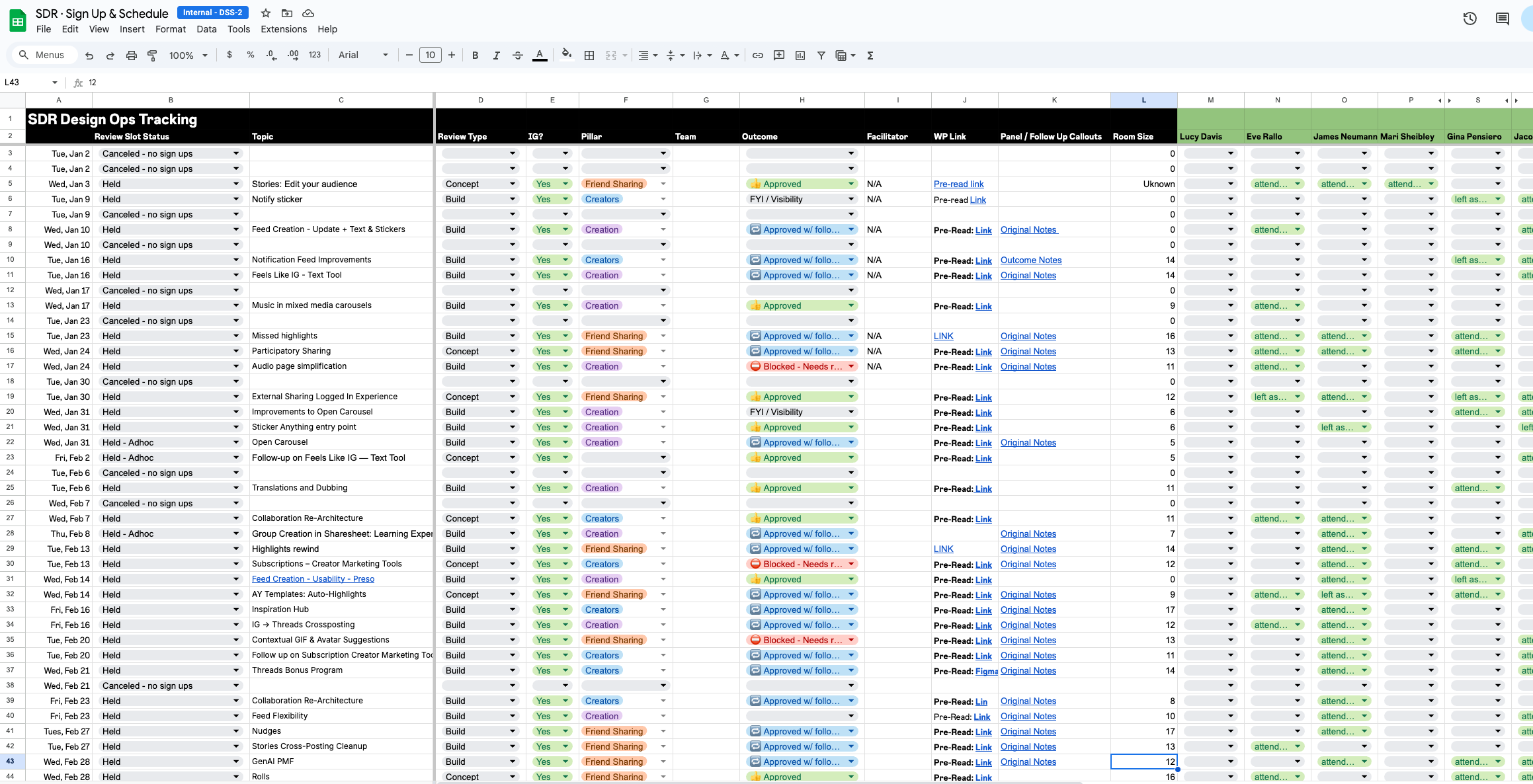

ICs sign up for one of 4 available review slots in a given week.

Sign-ups trigger automated messaging to the on-call reviewers, who pre-review the content.

A 30-minute session is held, during which reviewers primarily ask clarifying questions and discuss decision-making in a given user flow.

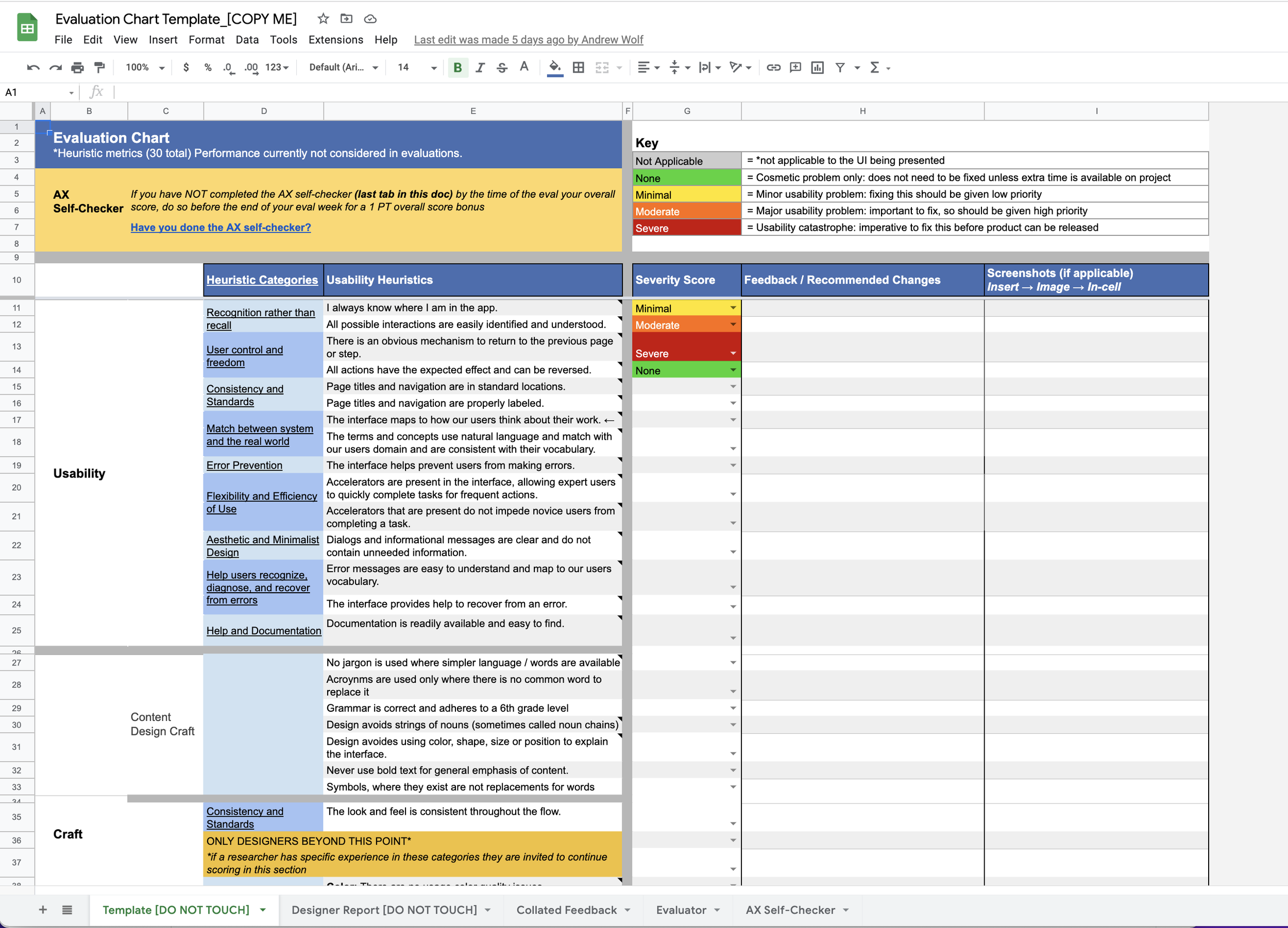

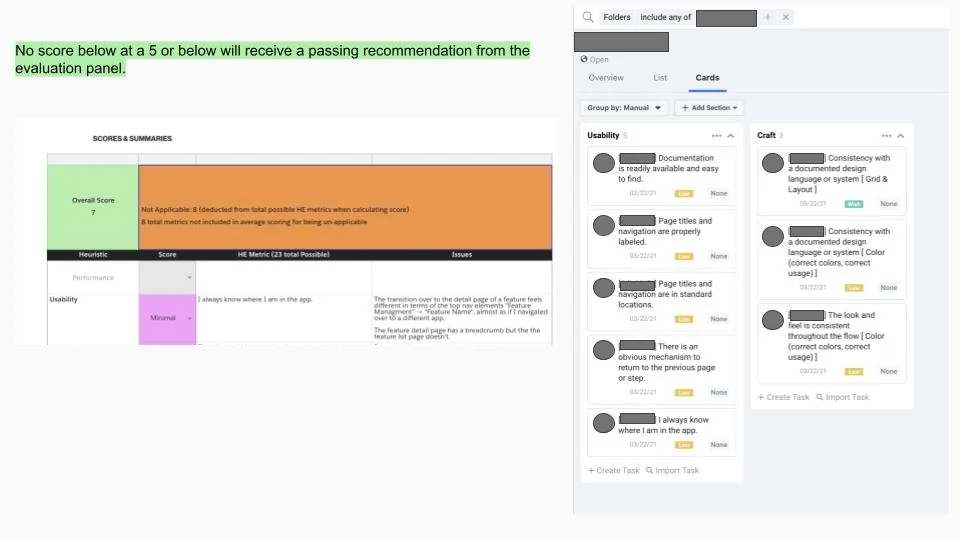

Feedback and scoring are done individually by each reviewer and collated by an operations rep.

The changes and quality score are added to the auto-generated Task and sent back to the requester to address at a later date.

Quarterly reporting is shared with managers to assess the health of their products.

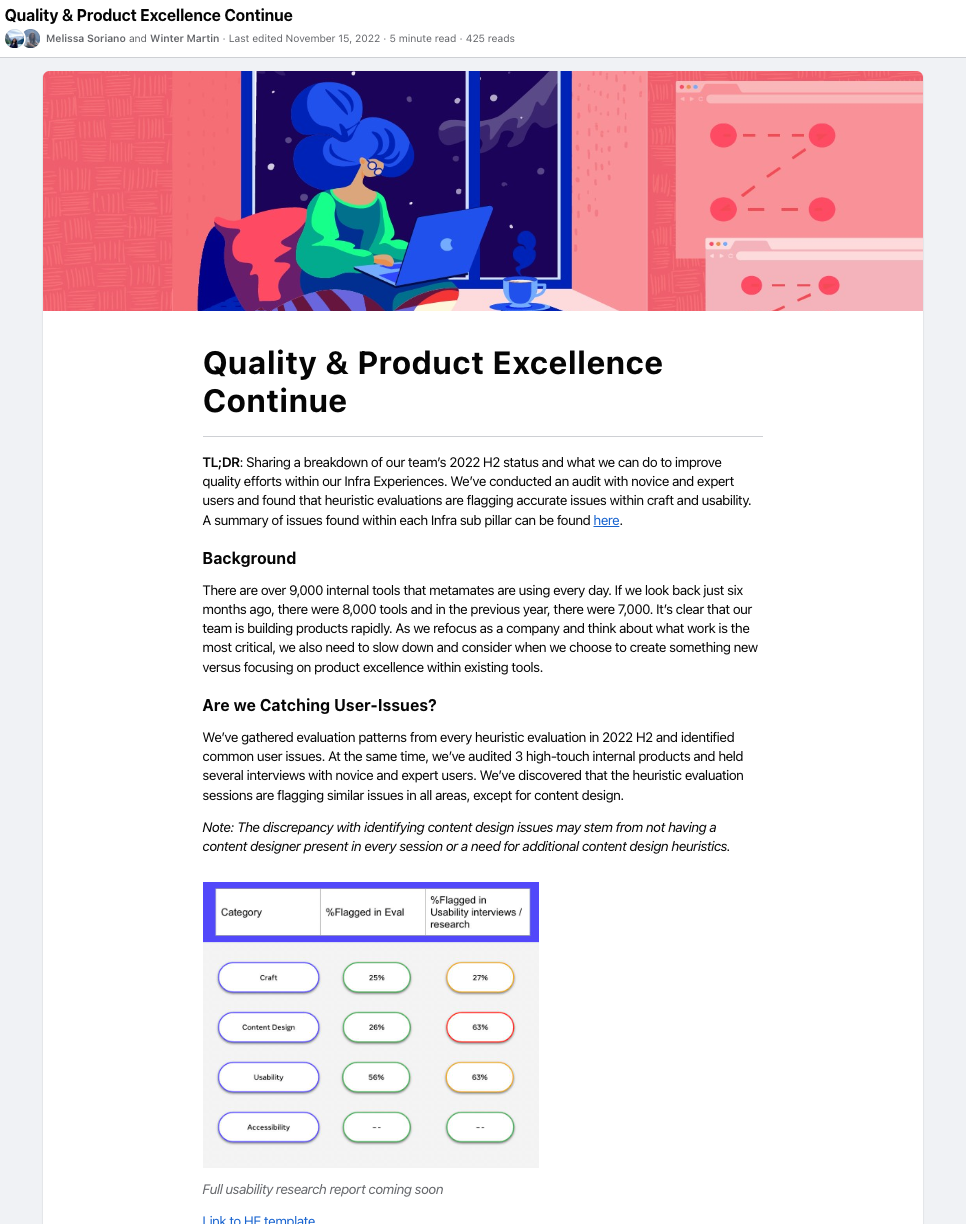

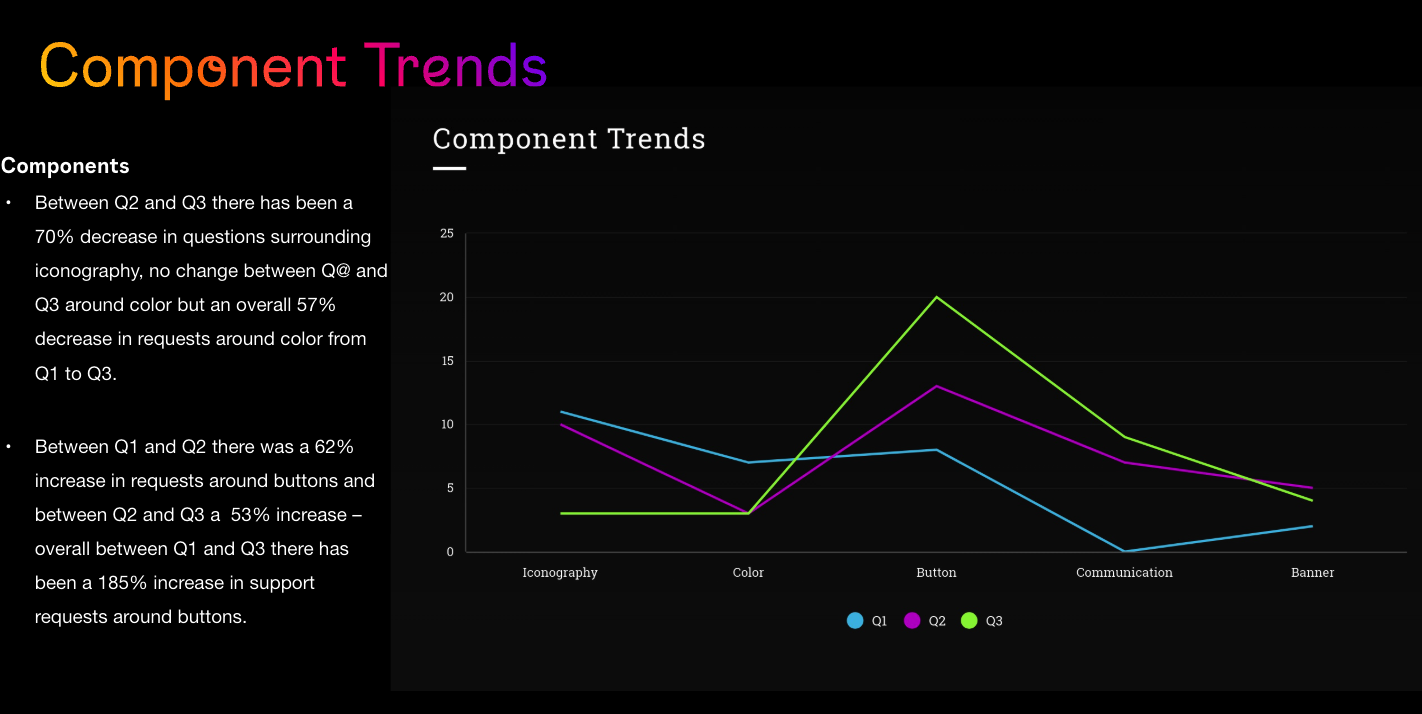

Half-year reporting is done publicly to share general data and org-tool trends.

Results

100% participation across the Infrastructure org

70% reduction in ENG and Design re-design/fix time post-launch

26% overall quality improvement (against established accessibility, craft, and performance heuristics)

Program expansion to the Enterprise product org, plus three additional programs and design review pillar guidance developed as a direct result of the program guides.

Similar Work

IG Design Review

I ran and optimized the Sharing pillar reviews for the Instagram Design Review.

Monetization [seller] Governance Program

I supported and iterated upon the monetization governance program (an internal cross-collaboration program to improve the seller experience).

Other Meta Work

Process

- Uncovering the root of the problem through in-depth investigation, research, and stakeholder collaboration to inform a clear path forward.

- Engaging and aligning stakeholders by articulating value, creating buy-in, and fostering a shared vision for success.

- Crafting and delivering tailored solutions through iterative design, execution, and hands-on collaboration.

- Continuously improving outcomes by analyzing results, gathering feedback, and refining solutions for long-term impact.